Rethinking voice AI at the edge: A practical offline pipeline on DGX Spark

How Arm CPUs and open source enable real-time, privacy-preserving voice conversations with faster-whisper and vLLM

By Odin Shen

1. Summary

Cloud-based AI dominates the headlines, but responsive and private interaction lies at the edge. This blog post shows how to build a fully offline, real-time voice assistant using the Arm-based NVIDIA DGX Spark platform. The system integrates open-source components such as faster-whisper and vLLM. It delivers low-latency, human-like dialogue without sending data outside the local environment.

Get started now. You can find the complete example and step-by-step instructions from this blog post on the Arm Learning Path.

2. The technical challenge: The cloud dependency trap

In many enterprise environments, technical staff need fast access to internal documents or real-time assistance. However, relying on cloud APIs introduces three critical bottlenecks:

- Latency: Round-trip delays to cloud APIs disrupt the natural flow of voice conversations.

- Privacy: Sending sensitive data to external servers introduces compliance risks, especially for proprietary content.

- Cost and control: API-based pricing and usage limits restrict scaling and customization for specific industry needs.

3. The solution: A heterogeneous, opensource pipeline

To solve this, we have designed a pipeline on the DGX Spark, which is built on the Grace-Blackwell GB10 architecture. The pipeline treats the CPU as an active, latency-optimized engine.

The software stack

To ensure maximum flexibility and performance, the entire system runs using the following open-source tools:

| Component | Software / Model | Software / Model | License / Accessibility |

| Audio Capture | PyAudio | Real-time 16 kHz microphone streaming. | MIT License |

| Speech Detection | WebRTC VAD | 30 ms frame‑based voice/silence detection. | BSD‑style |

| Speech‑to‑Text | faster‑whisper | Efficient, high‑quality transcription on Arm CPU. | MIT License |

| Inference Engine | vLLM | GPU‑accelerated LLM serving with quantized model support. | Apache‑2.0 |

| Language Model | Mistral‑7B-Instruct / Llama-3-70B (GPTQ) | Local reasoning and natural language response. | HF Model License / Model Card Terms |

4. Key components and system design

4.1 Real-time audio and voice activity detection

The system captures 16 kHz mono audio and uses WebRTC voice activity detection (VAD) to detect speech in 30ms frames. This approach ensures that we process only valid utterances and ignore background noise and gaps.

4.2 High-speed transcription on the Arm CPU

Instead of offloading short, latency-sensitive tasks to the GPU, we use the high-performance Arm CPU complex (the Cortex-X and A cores).

- Why Arm: The Arm architecture is optimized for latency-critical workloads such as real-time search and small-batch inference.

- Performance: Running faster-whisper on Arm CPUs meets the low-latency requirement, with transcription times of about 70 to 90 milliseconds for interactive systems.

4.3 GPU-accelerated reasoning (vLLM)

After transcription, the text moves into vLLM. DGX Spark uses Unified Memory so the CPU and GPU share a single memory space. This design lets the GPU directly access CPU output and removes the need for explicit data transfers or PCIe copy overhead.

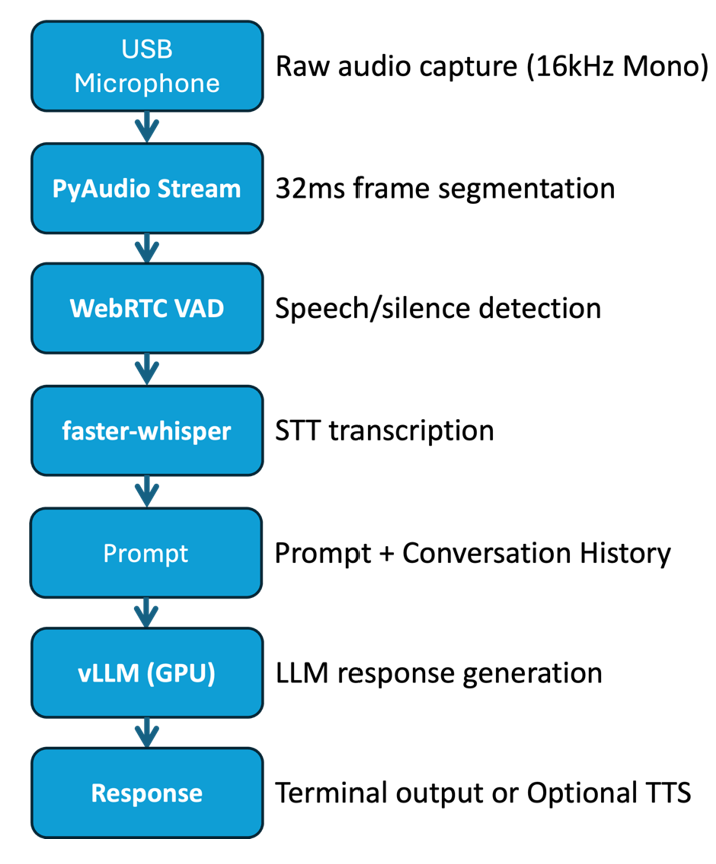

5. System architecture flowchart

This flowchart illustrates a high-performance heterogeneous pipeline on DGX Spark. Tasks are allocated to the most efficient compute units to reduce latency.

This pipeline uses Arm Cortex-X and A- CPU cores to handle latency-sensitive audio capture and speech-to-text transcription. This approach delivers response times below 100ms. The system uses Unified Memory so the GPU can directly access transcribed data in shared DRAM. This removes traditional PCIe transfer overhead. The process ends with the NVIDIA GPU executing the vLLM engine to generate intelligent responses. This delivers a high-throughput and private conversational experience.

Figure 1: DGX Spark Heterogeneous Pipeline. Arm CPUs handle STT transcription and GPU generate the response to minimize interaction latency.

6. Demo: Local voice interaction and performance metrics

We validated the system using a multi-turn subscription cancellation scenario. The system produced verified, grounded answers without hallucinations.

Performance metrics: Latency breakdown

The following data tracks the exact time from the end of the user's speech to the start of the LLM's response (response latency).

| Dialogue Turn | Speech End Time | vLLM Response Start | Response Latency (s) |

| Turn 1 | 00:10 | 00:13 | 3 seconds |

| Turn 2 | 00:24 | 00:28 | 4 seconds |

| Turn 3 | 00:41 | 00:45 | 4 seconds |

| Turn 4 | 00:54 | 00:59 | 5 seconds |

Observation: All turns achieved an average response latency of four seconds. This performance is competitive with cloud-based solutions and provides stronger privacy with no connectivity requirements.

7. Ready to build your own?

We believe the best way to understand the power of Arm-based AI is to experience it firsthand. We have prepared a comprehensive, step-by-step Learning Path that helps you deploy this pipeline.

The tutorial shows you how to:

1. Configure professional-grade microphone capture for edge devices.

2. Optimize faster-whisper for the Arm Cortex-X architecture.

3. Deploy vLLM with quantized models to maximize local throughput.

Build an offline voice chatbot with faster-whisper and vLLM on DGX Spark Learning Path

By Odin Shen

Re-use is only permitted for informational and non-commercial or personal use only.