Start Developing Edge AI on Arm

Get hands-on with edge AI development on Arm. This quick start guide helps you develop, test, and deploy AI workloads on both high-performance Embedded Linux devices and ultra-efficient Cortex-M microcontrollers.

Choose Your Platform

Embedded Linux with Cortex-A

For higher-performance edge devices.

Microcontrollers with Cortex-M

For ultra-constrained edge devices.

Embedded Linux with Cortex-A

For higher-performance edge devices, this path lets you explore more complex applications on Embedded Linux platforms. Start directly on your laptop or desktop, then deploy the same code to your target hardware.

Quick Examples — Get Started in Minutes

Edge AI runs entirely on your device at deployment: no internet connection needed, no data leaving the device, and no API costs. Pick an example to get started:

Microcontrollers with Cortex-M and Ethos-U

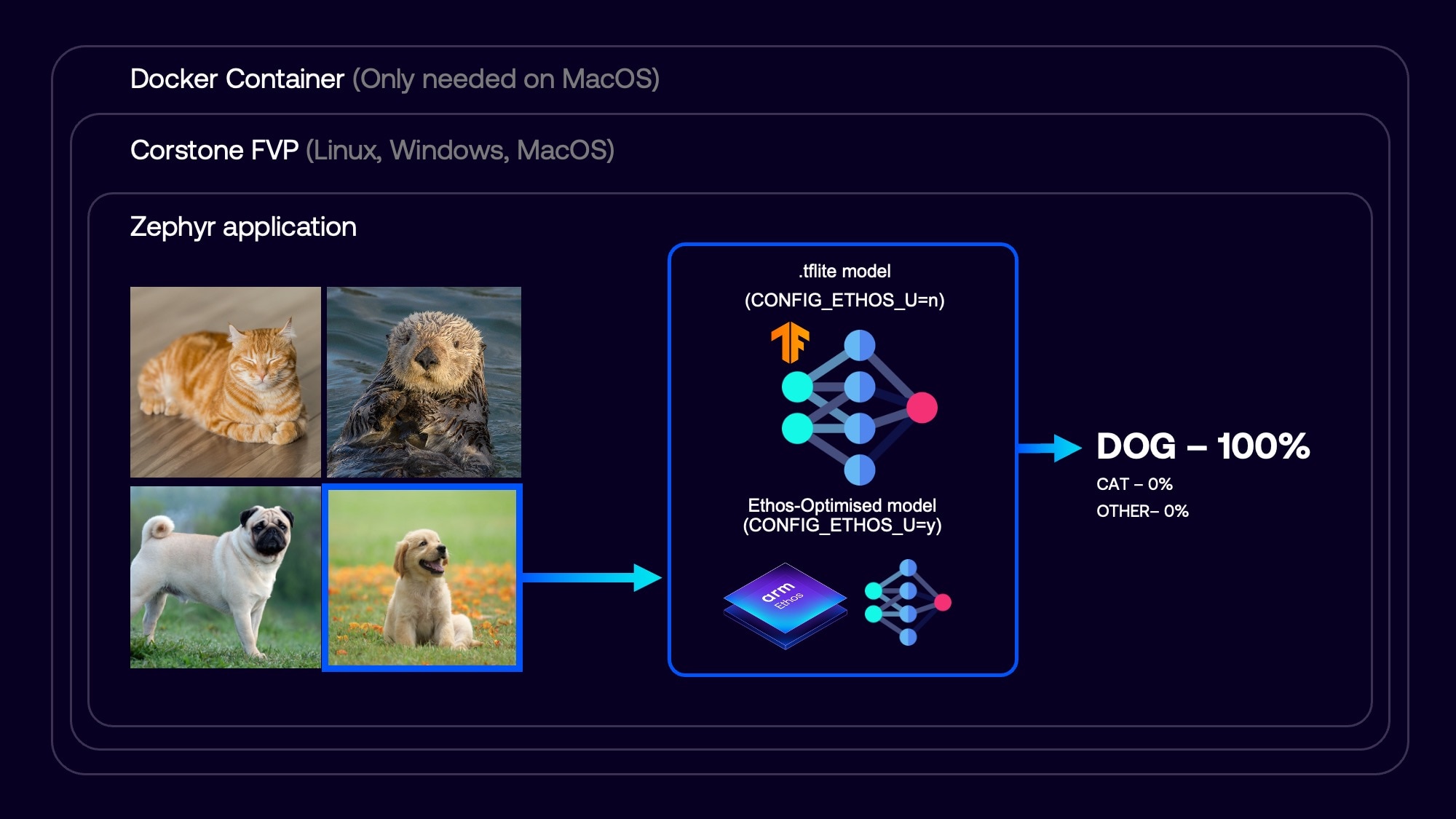

For microcontroller-class devices, this project demonstrates how to run efficient, real-time AI workloads with Zephyr RTOS, the Arm Corstone-300 reference system, and LiteRT Micro, all packaged with Docker for a consistent setup.

The sample uses a MobileNetV2 model to classify images of cats, dogs, and other animals with LiteRT Micro. Everything runs on the Arm Corstone-300 FVP (Fixed Virtual Platform) simulator, so no physical hardware is required.

Follow the instructions in the README to set up your environment and run the animal-classification example.
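To give a rough sense of what happens after the model runs, here is a minimal Python sketch of the classification post-processing step: turning quantized int8 output values back into scores and picking the best label. It is an illustration only, not code from the sample (which runs on the target, not in Python). The label list, the `scale` and `zero_point` values, and the raw output values are all hypothetical.

```python
def dequantize(q_values, scale, zero_point):
    """Map int8 outputs to real-valued scores: real = scale * (q - zero_point)."""
    return [scale * (q - zero_point) for q in q_values]


def top_class(q_values, labels, scale, zero_point):
    """Return the label with the highest dequantized score, plus that score."""
    scores = dequantize(q_values, scale, zero_point)
    best = max(range(len(scores)), key=scores.__getitem__)
    return labels[best], scores[best]


# Hypothetical labels and quantization parameters, chosen for illustration.
LABELS = ["cat", "dog", "other"]
raw_output = [-128, 90, -90]  # made-up int8 values from an output tensor

label, score = top_class(raw_output, LABELS, scale=1 / 256, zero_point=-128)
print(f"predicted: {label} (score {score:.3f})")
```

In a real deployment the scale and zero point come from the model's output tensor metadata rather than being hard-coded.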

Take the next steps in your journey building, optimizing, and deploying AI on Cortex-M and Ethos-U with this developer launchpad.

Summary

In this quick start, you explored the essentials of running edge AI across different classes of devices:

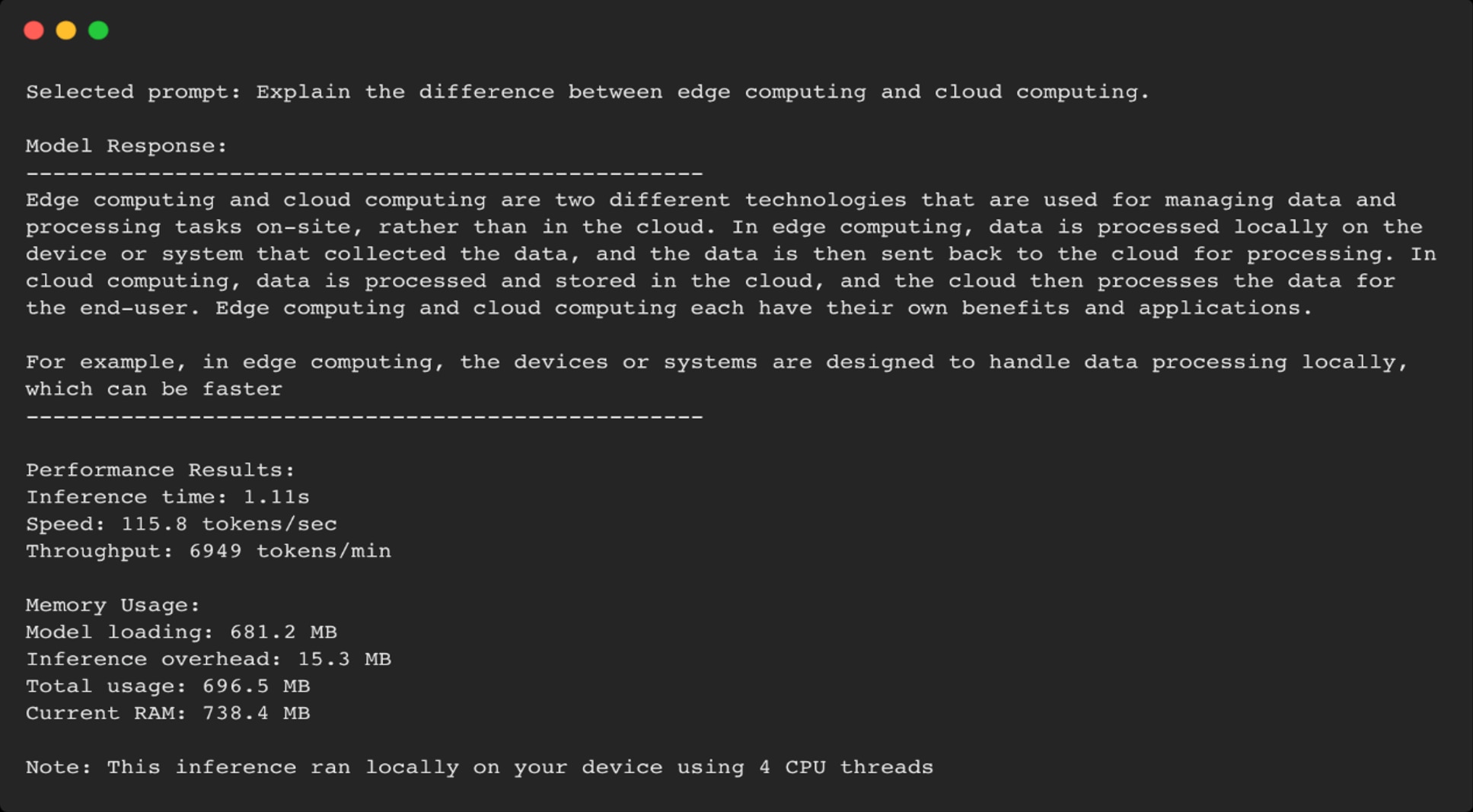

- Developer machine prototyping: Quickly test an edge AI application and its integration before moving to target hardware.

- Model trade-offs: See how model size influences accuracy, inference speed, and memory usage.

- Optimization benefits: Understand how methods like quantization can significantly reduce model size while keeping accuracy and inference speed in balance.

- MCU deployment: Run edge AI on a microcontroller with an RTOS like Zephyr, and simulate execution on the Arm Corstone-300 FVP before flashing to real hardware.
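The quantization point above can be made concrete with a small, self-contained Python sketch. It assumes a simple affine (scale and zero-point) int8 scheme applied to a handful of made-up weights; real toolchains typically quantize per tensor or per channel and calibrate activations, so treat this as an illustration of the size versus precision trade-off rather than how any particular converter works.

```python
def quantize_int8(values):
    """Affine-quantize floats to int8; returns (quantized, scale, zero_point)."""
    lo, hi = min(values), max(values)
    lo, hi = min(lo, 0.0), max(hi, 0.0)   # keep 0.0 inside the covered range
    scale = (hi - lo) / 255 or 1.0        # fall back to 1.0 if all values are 0
    zero_point = round(-128 - lo / scale)
    quantized = [
        max(-128, min(127, round(v / scale) + zero_point)) for v in values
    ]
    return quantized, scale, zero_point


def dequantize(quantized, scale, zero_point):
    """Recover approximate floats: real = scale * (q - zero_point)."""
    return [scale * (q - zero_point) for q in quantized]


weights = [-1.0, -0.25, 0.0, 0.5, 1.0]    # made-up example weights
q, scale, zero_point = quantize_int8(weights)
restored = dequantize(q, scale, zero_point)

max_error = max(abs(a - b) for a, b in zip(weights, restored))
float32_bytes = 4 * len(weights)          # 4 bytes per float32 weight
int8_bytes = 1 * len(weights)             # 1 byte per int8 weight

print(f"int8 values: {q}")
print(f"size: {float32_bytes} B -> {int8_bytes} B, max error {max_error:.4f}")
```

Storing each weight in one byte instead of four cuts weight storage by a factor of four, while the round-trip error stays within one quantization step (`scale`).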

Want to see more examples of edge AI applications? Explore them here.