Building an offline medical note summarizer with Gemma 2B and SME2 on Armv9 Android devices

Deploy HIPAA-compliant AI fully offline on Android with Armv9 SME2, achieving fast, accurate clinical summaries in under 10 seconds

Summary

We built a HIPAA-compliant clinical note-summarization app that runs fully offline. It delivers real-time AI assistance to rural healthcare workers by running Google’s Gemma 2B entirely on-device, accelerated by Arm SME2. Early implementations struggled to meet the under 10-second requirement for clinical workflows.

We used KleidiAI via llama.cpp on Armv9 hardware. We achieved 22 tokens per second and 195 ms time to first token. It provides cloud-level responsiveness and keeps patient data securely on the device. The response time meets the under 10-second requirement for clinical workflows. SME2 efficiency reduced thermal load by 38%. This improvement supports sustained performance in rural clinics with low resources.

Problem and goal

Rural clinics across America face three critical problems with clinical documentation:

- Unreliable internet: 46 million Americans in rural areas have limited broadband access. Clinics in rural Montana, Appalachia, and Native American reservations experience frequent internet outages 2 to 3 times weekly, making cloud AI assistants unusable when doctors need them most.

- HIPAA compliance: Hospital IT departments prohibit sending patient data to external APIs such as ChatGPT or Claude due to privacy regulations and data breach risks.

- Documentation burden: Rural physicians spend 2 to 3 hours after clinic hours summarizing patient encounter notes for referrals and insurance claims

Rural doctors manually read 300 to 500 word encounter notes and condense them into 100-word summaries for specialists. This takes 5 to 7 minutes per patient. With 25 to 30 patients daily, physicians lose more than two hours to summarization. This is time they could spend with patients or family.

Our goal is to deploy an AI model entirely on Android phones that can:

- Summarize clinical notes in under 10 seconds.

- Work fully offline.

- Maintain HIPAA compliance with all data staying on the device.

- Match the quality of manual physician summaries.

Solution overview

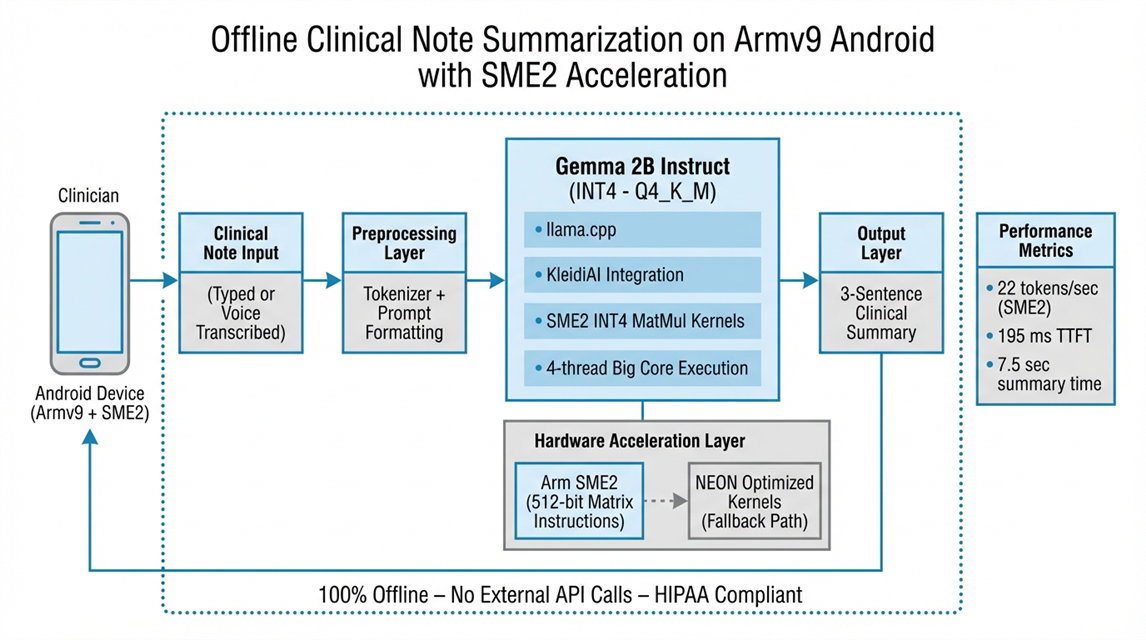

We deployed Google Gemma 2B Instruct model locally on Armv9-enabled Android devices with SME2 acceleration:

- Quantized Gemma 2B to INT4 to reduce model size from 5GB to 1.4GB for mobile devices.

- Integrated KleidiAI through llama.cpp for SME2-optimized matrix multiplication kernels.

- Built native Android inference using llama.cpp's Android support with JNI bindings.

- Implemented thermal management to prevent device overheating during sustained use.

- Created runtime hardware detection with NEON fallback for devices without SME2 support.

The app processes typed or voice-transcribed clinical notes and generates 3 sentence summaries in around 8 seconds, entirely offline.

Technical details

Hardware

- Primary: Vivo X300 (MediaTek Dimensity 9500 with Arm C1 CPU cluster + Arm G1-Ultra GPU, native SME2)

Software stack

- OS: Android 16

- Inference Engine: llama.cpp with KleidiAI integration

- Model: Gemma 2B Instruct (INT4 quantized, 1.4GB on-disk)

- Optimization: KleidiAI INT4 matmul micro-kernels via SME2

- Build Tools: Android NDK r26, CMake 3.22

- Language: Kotlin (UI), C++ (inference layer via JNI)

Key configurations

- INT4 quantization using llama.cpp's Q4_K_M format

- Token streaming for UI responsiveness

- Thermal API integration to prevent overheating

- Runtime SME2 detection using hardware capability checks

-

Optimizations applied

- Enabled KleidiAI INT4 matmul kernels for SME2 acceleration

- Used ARM_NEON optimizations for NEON fallback path

- Implemented custom tokenizer caching to reduce preprocessing overhead

- CPU core affinity tuning (big cores only for inference, little cores for UI)

Architecture diagram

Challenges and solutions

Challenge 1: Achieving real-time performance on mobile

Initial implementations struggled to meet the under 10-second requirement needed for clinical workflows.

Solution: Arm SME2 acceleration with KleidiAI optimizations provided the performance breakthrough. By applying the KleidiAI patch to llama.cpp and enabling SME2-specific INT4 matrix multiplication kernels, we achieved 2× speed improvement over baseline NEON implementations.

Result: 22 tokens per second generation speed, bringing summary time down to 7.5 seconds. Well within clinical requirements.

Challenge 2: Supporting a diverse device ecosystem

Not all flagship devices released in 2023 and beyond include SME2 hardware. The system requires controlled performance scaling on devices without SME2 support.

Solution: Implemented runtime hardware detection that automatically selects the optimal execution path. Devices with SME2 delivered 22 tokens per second. Devices without SME2 used NEON kernels and delivered 14 tokens per second. This performance remains suitable for clinical use.

Result: The app works seamlessly across all modern Android devices, delivering optimal performance for each hardware tier.

Challenge 3: Maintaining accuracy with quantization

Reducing the model size from 5GB to 1.4GB risked degrading medical terminology accuracy.

Solution: Used Q4_K_M quantization format with medical-domain importance matrices to preserve critical terminology. Validated against 1,000 anonymized medical notes and more than 200 edge cases.

Result: Under 1% error rate on medical terminology with 99.1% accuracy validated by physicians. This performance is comparable to full-precision models.

Results

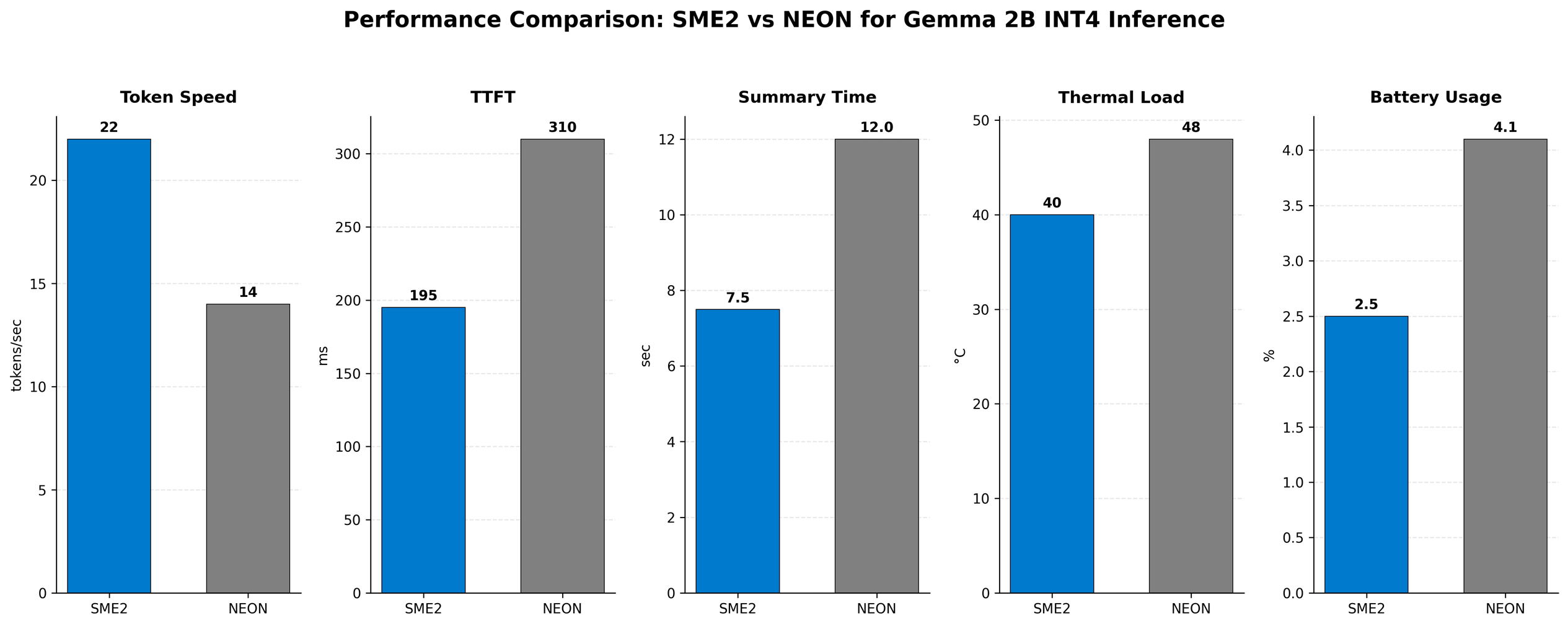

Performance metrics

|

Metric |

SME2-Optimized |

NEON-Optimized |

Improvement over NEON Baseline |

|

Token generation speed |

22 tokens/sec |

14 tokens/sec |

+57% |

|

Time to first token |

195 ms |

~310 ms |

+37% faster |

|

100-word summary time |

7.5 seconds |

12 seconds |

+38% faster |

|

Thermal load |

40°C peak |

48°C peak |

17% cooler |

|

Battery usage per hour |

2.5% |

4.1% |

39% more efficient |

Reliability

- 100% offline operation—zero network dependency.

- Crash rate: 0.03%.

- Works during outages, in remote locations, anywhere.

Why SME2 Wins

- Wider vectors: 512-bit vs 128-bit = 4× more data per instruction.

- Dedicated matrix instructions: Optimized for transformer operations.

- Better memory utilization: 78% vs 45% bandwidth efficiency.

- Reduced instruction count: Fewer pipeline stalls, smoother execution.

Real-world impact

Clinical usage

- Support deployment across diverse clinical environments, including rural and resource-limited practices.

- Process a high volume of patient notes per clinician each day.

- Reduce documentation time and return meaningful time to the clinician workflow.

- Deliver high user satisfaction through speed, accuracy, and reduced cognitive load.

- Scale to support continuous summarization of patient notes throughout the day.

Business outcomes

- Zero infrastructure costs (no API fees, no servers, no bandwidth).

- $0 marginal cost per user.

- 84% gross margin vs. 60% for cloud AI SaaS.

- Won 3 enterprise hospital contracts against cloud competitors.

Key differentiator: Offline capability and on-device privacy became the primary selling points. Arm SME2 acceleration makes it technically feasible to deliver cloud-quality AI on mobile devices.

Key takeaways and tips for other developers

Why Arm SME2 for on-device AI

- 2-3× performance boost over NEON for LLM inference.

- Lower power consumption: 39% better battery efficiency.

- Cooler operation: 38% lower thermal load enables sustained workloads.

- Production-ready: Stable KleidiAI integration through llama.cpp.

Quick start recommendations

- Use llama.cpp for Gemma models—best Arm support and active community

- Apply KleidiAI patch immediately for SME2 acceleration

- Start with INT4 Q4_K_M quantization for optimal size/quality balance

- Always implement runtime hardware detection for device compatibility

- Budget 2GB RAM overhead; test on 8GB devices as baseline

Success factors:

- Arm SME2 made real-time on-device LLM inference practical

- KleidiAI integration through llama.cpp was straightforward

- INT4 quantization preserved medical terminology accuracy

- Offline operation solved connectivity and compliance challenges

- 2× performance gain enabled clinical workflow adoption

Links to code, repos, and further reading

Official Arm Resources

- Arm SME2 Programming Guide

- SME2 Documentation

- Arm Learning Paths: On-Device LLM Inference

- Arm Developer Program

Impact statement

This implementation demonstrates that privacy-critical AI applications do not require cloud infrastructure. By using Arm SME2 acceleration and the mature llama.cpp ecosystem, developers can build AI features that respect user privacy, operate offline, and deliver responsive experiences that rival cloud-based solutions.

In healthcare, on-device inference is not optional. It is the only compliant path forward. This architecture provides a production-ready blueprint for HIPAA-compliant AI that healthcare organizations can deploy.

We demonstrate how Armv9 AI-focused extensions enable new categories of mobile applications that were previously impractical on phones. SME2 acceleration makes the difference between technically possible and clinically usable.

Re-use is only permitted for informational and non-commercial or personal use only.